http://www.gregarnette.com/blog/2012/05/cloud-servers-are-not-our-pets/ Interesting post by Greg, I never thought of it in those terms but I do remember names of servers from many previous employers…. names of planets, places, animals, … Not any more.

Author: amrith

Comparing parallel databases to sharding

I just posted an article comparing parallel databases to sharding on the ParElastic blog at http://bit.ly/JaMeVr

It was motivated by the fact that I’ve been asked a couple of times recently how the ParElastic architecture compares with sharding and it occurred to me this past weekend that

“Parallel Database” is a database architecture but sharding is an application architecture

Read the entire blog post here:

Scaling MongoDB: A year with MongoDB (Engineering at KiiP)

Here is the synopsis:

- A year with MongoDB in production

- Nine months spent in moving 95% of the data off MongoDB and onto PostgreSQL

Over the past 6 months, we’ve “scaled” MongoDB by moving data off of it.

Read the complete article here: http://bit.ly/HIQ8ox

Top 5 Challenges Migrating To a New Cloud

http://www.hightechinthehub.com/2012/04/top-5-challenges-migrating-to-a-new-cloud/

The need for standardization and some common API’s … Things like Open Stack & Eucalyptus, … maybe?

Laptop vs Mobile (from Fred Wilson’s blog)

http://feedproxy.google.com/~r/AVc/~3/v2vGh2MaFdA/laptop-vs-mobile.html

Interesting post. Now that I’m traveling more, I do face this dilemma.

The tablet (I’m using it to post this) doesn’t cut it as a travel device. Nor does the droid x2, nor my netbook. But since the alternative is straining my back and lugging a laptop along, I am making do with a netbook when I travel.

A universal dock in hotel rooms would be great. Better voice transcription would make devices better, even this tablet!

Bits: Hotel’s Free Wi-Fi Comes With Hidden Extras

http://feeds.nytimes.com/click.phdo?i=17c42a2b88a00d5651357aafaa48daae

There is no such thing as a free WiFi … If they can insert ads, what else can they insert?

Widespread Virus Proves Macs Are No Longer Safe From Hackers

My heart bleeds for all Mac users who for years thumbed their noses at us Windoze folks.

Welcome to the party!

Old memories … a foggy day on Memorial Day Weekend ’97

Was thinking about an old picture I took in 1997.

It is on flickr …

I had copies of this picture made and I used to have it hang on my office wall just as it is above. Look carefully, it is upside down … How often have you seen a duck swimming in the air?

So Many Conferences – How Do I Choose?

http://feedproxy.google.com/~r/FeldThoughts/~3/qVn2ysRHRqY/so-many-conferences-how-do-i-choose.html

Great post by Brad Feld with some very good advice on how to figure out which of the gazillion conferences, expos and shows to attend.

SQL, NoSQL, NewSQL and now a new term …

Read all about it at C Mohan’s blog (cmohan.tumblr.com).

Mohan knows a thing or two about databases. As a matter of fact, keeping track of his database related achievements, publications and citations is in itself a big-data problem.

Strike 3 for #ATT … and I thought #verizon was bad ….

Take a read of what it is like to have a windows phone on AT&T …

http://hal2020.com/2012/03/26/strike-3-for-att/

Here I was unhappy at Verizon for not updating my two year old tablet to ice cream sandwich.

Looks like the carriers and their interference in the timely release of software is a serious problem worth considering some more during the buying decision.

Why linux on the desktop is dead

PCWorld: Why Linux on the Desktop Is Dead http://goo.gl/mag/r9i1B

I’ve tried linux several times and I return to windows each time. Sad but true …

Revisiting Network I/O APIs: The netmap Framework

Great article on High Scalability entitled Revisiting Network I/O APIs: The netmap Framework. Get the paper they reference here.

As network performance continues to improve, the bottleneck will become the time spent moving packets between the wire (hardware) and the application (software) and vice versa. The netmap framework is an interesting approach to address this.

if this then that (@ifttt)

Great service called “if this then that” (ifttt). Allows you to create tasks based on specific triggers from one of about forty channels.

I’m thrilled that ‘starring an item’ in Google Reader is a channel.

When a trigger occurs, you can have ifttt generate a specified action.

I have one …

When I post this article, it will be automatically tweeted … Very cool, check them out!

My blog is all f’ed up

My blog has been all f’ed up for some time now, and I didn’t realize it. I’ve been reading stuff and tagging it on my tablet and in the past that used to make it pop up in an RSS feed that was displayed on my blog as ‘breadcrumbs’. But, somewhere along the way, all that fell apart.

- Maybe it was because something changed in the way the bit.ly links were shared.

- Maybe it was because the ‘unofficial’ bit.ly client that I was using didn’t really work and therefore nothing made it to bit.ly and therefore to the RSS feed.

- And Gimmebar did one thing, and they did it well. But they didn’t do the next thing they promised (an android app).

So, from about November 2011 when Google went and wrecked Google Reader by eliminating the ‘share this’ functionality till today, all the stuff I’ve read and thought I shared is gone …

Time to use twitter as the sharing system. That seems to work. I don’t like it, but it will have to do for now.

Cloud CPU Cost over the years

Great article by Greg Arnette about the crashing cost of CPU over the years, thanks to the introduction of the cloud.

http://www.gregarnette.com/blog/2011/11/a-brief-history-cloud-cpu-costs-over-the-past-5-years/

Personally, I think the most profound one was in December 2009 with the introduction of “spot pricing”.

Effectively you have an auction for the cost of an instance at any time and so long as the prevailing price is lower than the price you are willing to pay, you get to keep your instance.
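Here is a minimal sketch of that rule in Python. The class, the function names and the prices are all made up for illustration; this is not any cloud provider's real API, and treating a tie (price equal to bid) as "keep the instance" is my assumption.

```python
from dataclasses import dataclass


@dataclass
class SpotRequest:
    """A hypothetical bid for one instance (not a real provider object)."""
    max_price: float  # the most we are willing to pay per hour, in dollars


def keeps_instance(request: SpotRequest, prevailing_price: float) -> bool:
    """True while the prevailing price does not exceed our bid.

    Assumption: a tie (price == bid) lets us keep the instance.
    """
    return prevailing_price <= request.max_price


def hours_until_reclaimed(request: SpotRequest, hourly_prices: list) -> int:
    """Count how many consecutive hours we keep the instance."""
    hours = 0
    for price in hourly_prices:
        if not keeps_instance(request, price):
            break  # the price rose above our bid, the instance goes away
        hours += 1
    return hours


# Hypothetical price history: we bid 10 cents and lose the instance in hour 3.
print(hours_until_reclaimed(SpotRequest(max_price=0.10), [0.03, 0.05, 0.12, 0.04]))
```

The point of the sketch is the last price in the list: once the instance is reclaimed, a later drop in price does not bring it back on its own.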

Do one thing, and do it awesomely … Gimmebar!

From time to time you see a company come along that offers a simple product or service, and when they launch it just works.

The last time (that I can recall) when this happened was when I first used Dropbox. Download this little app and you got a 2GB drive in the cloud. And it worked on my Windows PC, on my Ubuntu PC, on my Android phone.

It just worked!

That was a while ago. And since then I’ve installed tons of software (and uninstalled 99% of it because it just didn’t work).

Last week I found Gimmebar.

There was no software to install, I just created an account on their web page. And it just worked!

What is Gimmebar? They consider themselves the 5th greatest invention of all time and they call themselves “a data steward”. I don’t know what that means. They also don’t tell you what the other 4 inventions are.

Here is how I would describe Gimmebar.

Gimmebar is a web saving/sharing tool that allows you to save things that you find interesting on the web in a nicely organised personal library in the cloud, and share some of that content with others if you so desire. They have something about saving stuff to your Dropbox account but I haven’t figured all of that out yet.

It has a bookmarklet for your browser, click it and things just get bookmarked and saved into your account.

But, it just worked!

I made a couple of collections, made one of them public and one of them shared.

If you share a collection it automatically gets a URL.

And that URL automatically supports an RSS Feed!

And they also back up your tweets (I don’t give a crap about that).

So, what’s missing?

- Some way to import all your stuff (from Google Reader)

- An Android application (more generally, mobile application for platform of choice …)

- The default ‘view’ on the collections includes previews; I will have enough crap before long where the preview will be a drag. How about a way to get just a list?

- Saving a bookmark is right now at least a three click process; once you visit the site, click the bookmarklet and you get a little banner on the bottom of the screen, you click there to indicate whether you want the page to go to your private or public area, then you click the collection you want to store it in. This is functional but not easy to use.

I had one interaction with their support (little feedback tab on their page). Very quick to respond and they answered my question immediately.

On the whole, this feels like my first experience with Dropbox. Give it a shot, I think you’ll like it.

Why? Because Gimmebar set out to do one thing and they did it awesomely. It just worked!

Cloud computing explained …

I’ve heard a lot of people explain cloud computing to me and none have been as lucid as this gem.

The MongoDB rant. Truth or hoax?

Two days ago, someone called ‘nomoremongo’ posted this on Y Combinator News.

Several people (me included) stumbled upon the article, read it, and took it at face value. It’s on the Internet, it’s got to be true, right?

No, seriously. I read it, and parts of it resonated with my understanding of how MongoDB works. I saw some of the “warnings” and they seemed real. I read this one (#7) and ironically, this was the one that convinced me that this was a true post.

**7. Things were shipped that should have never been shipped** Things with known, embarrassing bugs that could cause data problems were in "stable" releases--and often we weren't told about these issues until after they bit us, and then only b/c we had a super duper crazy platinum support contract with 10gen. The response was to send up a hot patch and that they were calling an RC internally, and then run that on our data.

Who but a naive engineer would feel this kind of self-righteous outrage 😉 I’ve shared this outrage at some time in my career, but then I also saw companies ship backup software (and have a party) when they knew that restore couldn’t possibly work (yes, a hot patch), software that could corrupt data in pretty mainstream circumstances (yes, a hot patch before anyone installed stuff), etc.

I spoke with a couple of people who know about MongoDB much better than I do and they all nodded about some of the things they read. The same article was also forwarded to me by someone who is clearly knowledgeable about MongoDB.

OK, truth has been established.

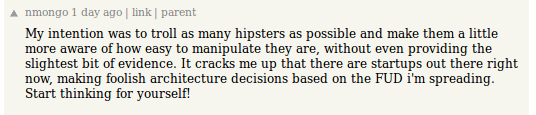

Then I saw this tweet.

Which was odd. Danny doesn’t usually swear (well, I’ve done things to him that have made him swear and a lot more but that was a long time ago). Right Danny?

Well, he had me at the “Start thinking for yourself”. But then he went off the meds, “MongoDB is the next MySQL”, really …

I think there’s a kernel of truth in the MongoDB rant. And it is certainly the case that a lot of startups are making dumb architectural decisions because someone told them that “MongoDB was web-scale”, or that “CAP Theorem told them that databases were dead”.

Was this a hoax? I don’t know. But it was certainly a reminder that not all scams originate in Nigeria, or begin by telling me that I could make a couple of billion dollars if I just put up a couple of thousand.

On migrating from Microsoft SQL Server to MongoDB

Just reading this article http://www.wireclub.com/development/TqnkQwQ8CxUYTVT90/read describing one company’s experiences migrating from SQL Server to MongoDB.

Having read the article, my only question to these folks is “why do it”?

Let’s begin by saying that we should discount all one time costs related to data migration. They are just that, one time migration costs. However monumental, if you believe that the final outcome is going to justify it, grin and bear the cost.

But, once you are in the (promised) MongoDB land, what then?

The things that this author believes that they will miss are:

- maturity

- tools

- query expressiveness

- transactions

- joins

- case insensitive indexes on text fields

Really, and you would still roll the dice in favor of a NoSQL science project? Well, then the benefits must be really really awesome! Let’s take a look at what those are:

- MongoDB is free

- MongoDB is fast

- Freedom from rigid schemas

- ObjectID’s are expressive and handy

- GridFS for distributed file storage

- Developed in the open

OK, I’m scratching my head now. None of these really blows me away. Let’s look at these one at a time.

- MongoDB is free

  - So are PostgreSQL and MySQL

- MongoDB is fast

  - So are PostgreSQL and MySQL if you put them on the same SSD and multiple HDD’s like you claim you do with MongoDB

- Freedom from rigid schemas

  - I’ll give you this one, relational databases are kind of “old school” in this department

- ObjectID’s are expressive and handy

  - Elastic Transparent Sharding schemes like ParElastic overcome this with Elastic Sequences which give you the same benefits. A half-way decent developer could do this for you with a simple sharded architecture.

- GridFS for distributed file storage

  - Replication anyone?

- Developed in the open

  - Yes, MongoDB is free and developed in the open like a puppy is “free”. You just told us all the “costs” associated with this “free puppy”

So really, why do people use MongoDB? I know there are good circumstances where MongoDB will whip the pants off any relational database but I submit to you that those are the 1%.

To this day, I believe that the best description of MongoDB is this one:

http://www.xtranormal.com/watch/6995033/mongo-db-is-web-scale

Mongo DB is web scale

by: gar1t

http://www.xtranormal.com/xtraplayr/6995033/mongo-db-is-web-scale

Google went and broke Google Reader!

A very nice feature of Google Reader (my RSS reader of choice) was that there was a simple button at the bottom of each article called “Share”, and the current URL would be added to a list of shared articles and an RSS feed could be created of that list!

The breadcrumbs feature on my web page relied on that; as I read things, if I wanted to make them show up in breadcrumbs, all I did was to hit the Share button. If I visited some random URL and wanted to share that, I used the “Note in Reader” bookmarklet. All very good. Till Google went and broke it.

Now all I get is this:

This sucks!

Others seem to have noticed this as well. A collection of related news:

Trick or treat the google reader changes are coming tonight

Save the Google Reader petition

Database scalability myth (again)

A common myth that has been perpetuated is that relational databases do not scale beyond two or three nodes. That, and the CAP Theorem, are considered to be the reason why relational databases are unscalable and why NoSQL is the only feasible solution!

Yesterday I ran into a very thought provoking article that makes just this case. You can read that entire post here. In this post, the author Srinath Perera provides an interesting template for choosing the data store for an application. In it, he makes the case that relational databases do not scale beyond 2 to 5 nodes. He writes,

The low scalability class roughly denotes the limits of RDBMS where they can be scaled by adding few replicas. However, data synchronization is expensive and usually RDBMSs do not scale for more than 2-5 nodes. The “Scalable” class roughly denotes data sharded (partitioned) across many nodes, and high scalability means ultra scalable systems like Google.

In 2002, when I started at Netezza, the first system I worked on (affectionately called Monolith) had almost 100 nodes. The first production class “Mercury” system had 108 nodes (112 nodes, 4 spares). By 2006, the systems had over 650 nodes and more recently much larger systems have been put into production. Yet, people still believe that relational databases don’t scale beyond two or three nodes!

Systems like ParElastic (Elastic Transparent Sharding) can certainly scale to much more than two or three nodes, and I’ve run prototype systems with up to 100 nodes on Amazon EC2!

Srinath’s post does contain an interesting perspective on unstructured and semi-structured data though, one that I think most will generally agree with.

All you ever wanted to know about the CAP Theorem but were scared to ask!

I just posted a longish blog post (six parts actually) about the CAP Theorem at the ParElastic blog.

http://www.parelastic.com/database-architectures/an-analysis-of-the-cap-theorem/

-amrith

Building Elastic Applications

I’ve been working on a series of blog posts (for the ParElastic blog http://www.parelastic.com/blog/) and the first of them is about building elastic applications.

You can read it here http://www.parelastic.com/database-architectures/engineering-an-elastic-application/

Embracing opposing points of view

In a blog aptly called “Both Sides of the Table”, I read a post entitled “Why You Should Embrace Opposing Views at Your Startup”.

It is a great post by Mark Suster and I highly recommend you read it.

If your startup lives in an echo chamber, and the only voice you hear is your own, it is most certainly doomed.

Dayton, OH embraces immigrants, cites entrepreneurship as a big draw

Bucking a national trend, Dayton Ohio has taken the bold step to welcome immigrants. They published a comprehensive 32 page report describing the program that was approved some days ago.

Here are some quotes that I read that I found encouraging.

According to the city, immigrants are two times more likely than others to become entrepreneurs.

1. Focus on East Third Street, generally between Keowee and Linden, as an initial international market place for immigrant entrepreneurship. East Third Street, in addition to being a primary thoroughfare between Downtown and Wright Patterson Air Force Base, also encompasses an area of organic immigrant growth and available space to support continuing immigrant entrepreneurship.

2. Create an inclusive community-wide campaign around immigrant entrepreneurship that facilitates startup businesses, opens global markets and restores life to Dayton neighborhoods.

Other coverage of this and related issues can also be found here:

Running databases in virtualized environments

I have long believed that databases can be successfully deployed in virtual machines. Among other things, that is one of the central ideas behind ParElastic, a start-up I helped launch earlier this year. Many companies (Amazon, Rackspace, Microsoft, for example) offer you hosted databases in the cloud!

But yesterday I read this post in RWW. This article talks about a report published by Principled Technologies in July 2011, a report commissioned by Intel, that

tested 12 database applications simultaneously – and all delivered strong and consistent performance. How strong? Read the case study, examine the results and testing methodology, and see for yourself.

Unfortunately, I believe that discerning readers of this report are more likely to question the conclusion(s) based on the methodology. What do you think?

A Summary of the Principled Technologies Report

In a nutshell, this report seeks to make the case that industry standard servers with virtualization can in fact deliver the performance required to run business critical database applications.

It attempts to do so by running VMware vSphere 5.0 on the newest four socket Intel Xeon E7-4870 based server and hosting 12 database applications, each of which has an 80GB database in its own virtual machine. The Intel Xeon E7-4870 is a 10 core processor with two hardware threads per core, clocked at 2.4GHz. The server had 1TB of RAM (64 modules each of which had 16GB). The storage in this server was 2 disks, each of which was 146GB in size (10k SAS). In addition, there was an EMC CLARiiON Fibre Channel SAN with some disks configured in RAID0. In total they configured 6 LUN’s each of which was 1066GB (over a TB each). The VM’s ran Windows Server 2008 R2, and SQL Server 2008 R2.

The report claims that the test that was performed was “Benchmark Factory’s TPC-H like workload”. Appendix B somewhat (IMHO) misleadingly calls this “Benchmark Factory TPC-H score”.

The result is that these twelve VM’s running against an 80GB database were able to consistently process in excess of 10,000 queries per hour each.

A comparison is made to the Netezza whitepaper that claims that the TwinFin data warehouse appliance running the “Nationwide Financial Services” workload was able to process around 2,500 queries per hour and a maximum of 10,000 queries per hour.

The report leaves the reader to believe that since the 12 VM’s in the test ran consistently more than 10,000 queries per hour, business critical applications can in fact be deployed in virtualized environments and deliver good performance.

The report concludes therefore that business critical applications can be run on virtualized platforms, deliver good performance, and reduce cost.

My opinion

While I entirely believe that virtualized database servers can produce very good performance, and while I entirely agree with the conclusion that was reached, I don’t believe that this whitepaper makes even a modestly credible case.

I ask you to consider this question, “Is the comparison with Netezza running 2,500 queries per hour legitimate?”

Without digging too far, I found that the Netezza whitepaper talks of a data warehouse with “more than 4.5TB of data”, 10 million database changes per day, 50 concurrent users at peak time and 10-15 on an average. 2,500 qph with a peak of 10k qph at month end, 99.5% completing in under one minute.

Based on the information disclosed, this comparison does not appear to be valid. Note well that I am not saying that this comparison is invalid, rather that the case has not been made sufficiently to justify it.

An important reason for my skepticism is that when processing database operations like joins between two tables, doubling the data volume can quadruple the amount of computation that may be required. If you are performing three table joins, doubling the data may increase the computation involved by as much as eight times. This is the very essence of the scalability challenge with databases!
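To make the "quadruples" and "eight times" concrete, here is a small sketch that counts row comparisons for a naive nested-loop join. This is a worst-case illustration only: the function names and the 1,000 row table size are mine, and a real optimizer with indexes or hash joins will usually do much better than this.

```python
def nested_loop_join_comparisons(*table_sizes: int) -> int:
    """Worst-case row comparisons when every row meets every other row."""
    total = 1
    for size in table_sizes:
        total *= size
    return total


def growth_factor(num_tables: int, scale: int = 2, rows: int = 1000) -> int:
    """How much the work grows when every table grows by `scale`."""
    before = nested_loop_join_comparisons(*([rows] * num_tables))
    after = nested_loop_join_comparisons(*([rows * scale] * num_tables))
    return after // before


print(growth_factor(2))  # two table join, data doubled
print(growth_factor(3))  # three table join, data doubled
```

In general the factor is the scale raised to the number of tables in the join, which is why comparing an 80GB database with a 4.5TB one purely on queries per hour tells you very little.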

I get an inkling that this may not be a valid comparison when we look at Appendix B that states that the total test time was under 750 seconds in all cases.

This feeling is compounded when I don’t see how many concurrent queries are run against each database. Single user database performance is a whole lot better and more predictable than multi-user performance. The Netezza paper specifically talks about the multi-user concurrency performance not the single-user performance.

Reading very carefully, I did find a mention that a single server running 12 VM’s hosted the client(s) for the benchmark. Since ~15k queries were completed in under 750s, we can say that each query lasted about 0.05s. Now, those are really really short queries. Impressive, but not what I would generally consider to be the kind of workload for which one would expect Netezza to be deployed. The Netezza report does clearly state that 99.5% completed in under one minute, which leads me to conclude that the queries being run in the subject benchmark are at least two orders of magnitude away!
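The arithmetic behind that estimate is simple enough to write down. The inputs are the rounded figures quoted above (~15k queries, just under 750 seconds); note that the one minute figure from the Netezza paper is an upper bound for 99.5% of queries and not an average, so the ratio is only a rough indication.

```python
import math

queries_completed = 15_000     # "~15k queries", rounded
test_duration_seconds = 750    # "under 750s", used as an upper bound

average_query_seconds = test_duration_seconds / queries_completed

# Netezza paper: 99.5% of queries completed in under one minute.
netezza_bound_seconds = 60
ratio = netezza_bound_seconds / average_query_seconds
orders_of_magnitude = math.log10(ratio)

print(f"average query time: {average_query_seconds:.2f}s")
print(f"ratio to the one minute bound: {ratio:.0f}x")
print(f"orders of magnitude: {orders_of_magnitude:.1f}")
```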

Conclusion

Virtualized environments like Amazon EC2, Rackspace, Microsoft Azure, and VMware are perfectly capable of running databases and database applications. One need only look at Amazon RDS (now with MySQL and Oracle), database.com, SQL Azure, and offerings like that to realize that this is in fact the case!

However, this report fails to make a compelling case for this. Making a comparison to a different whitepaper, and simply relating the results to the “queries per hour” in the other paper, causes me to question the methodology. Once readers question the method(s) used to reach a conclusion, they are likely to question the conclusion itself.

Therefore, I don’t believe that this report achieves what it set out to do.

References

You can get a copy of the white paper here, a link to scribd, or here, a link to the PDF on RWW.

This case study references a Netezza whitepaper on concurrency, which you can get here. The Netezza whitepaper is “CONCURRENCY & WORKLOAD MANAGEMENT IN NETEZZA”, and prepared by Winter Corp and sponsored by Netezza.

I have also archived copies of the two documents here and here.

A link to the TPC-H benchmark can be found on the TPC web site here.

Disclosure

In the interest of full disclosure, in the past I was an employee of Netezza, a company that is referenced in this report.

High Availability and Fault Tolerance

This article on HA and FT at ReadWriteWeb caught my eye. A while ago I used to work at Stratus and it is not often that I hear their name these days. Stratus’ Fault Tolerant systems achieve their impressive uptime by hardware redundancy.

In very simple terms, if the probability of some component or sub-system failure is p, then the probability of two failures at the same time is a much smaller p * p.
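As a quick sketch of that arithmetic (the function name and the failure probability used here are made up for illustration, and the whole thing assumes the failures are independent of each other, which is exactly what redundant hardware is trying to arrange):

```python
def simultaneous_failure_probability(p: float, redundant_units: int = 2) -> float:
    """Probability that all redundant units fail at the same time.

    Assumes each unit fails independently with the same probability p.
    """
    return p ** redundant_units


# Hypothetical component with a 1 in 1,000 chance of being down at any moment.
p = 0.001
print(simultaneous_failure_probability(p))      # duplexed
print(simultaneous_failure_probability(p, 3))   # triplexed
```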

When I was at Stratus, we used to guarantee “five nines”, or an uptime of 99.999% on systems that ran credit card networks, banking systems, air traffic control systems, and so on. Systems where the cost of downtime could be measured either in hundreds of thousands or millions of dollars an hour, or in human lives potentially lost.

Before I worked at Stratus, I used to work for a Stratus Customer and my first experience with Fault Tolerance was when I received a box in the mail with a note that said something to the effect that a CPU board had failed in one of our systems (about a month ago), so please pop that board out and put this replacement board in its place.

And we hadn’t realized it, the system had been chugging along just fine!

So what does uptime % translate to in terms of hours and minutes?

99% uptime : 3.65 days of downtime per year

99.9% uptime: 8.76 hours of downtime per year

99.99% uptime: 52.56 minutes of downtime per year

99.999% uptime: 5.256 minutes of downtime per year

Stratus claims that across its customer base of 8000 servers the uptime is 99.9998%

99.9998% uptime: 63 seconds of downtime per year.

Now, that’s pretty awesome!

And when I flew into Schiphol Airport, or saw containers being loaded onto ships in Singapore, or I used my American Express Credit Card, logged into AOL, or looked at my 401(k) on Fidelity, I felt pretty darn proud of it!
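If you want to check the downtime numbers in this post for yourself, the conversion is one line of arithmetic. This sketch assumes a 365 day year; the function name is just for illustration.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # assumes a 365 day year


def downtime_seconds_per_year(uptime_percent: float) -> float:
    """Seconds of downtime per year implied by an uptime percentage."""
    return (1 - uptime_percent / 100) * SECONDS_PER_YEAR


for uptime in (99, 99.9, 99.99, 99.999, 99.9998):
    seconds = downtime_seconds_per_year(uptime)
    print(f"{uptime}% uptime: {seconds:,.0f} seconds "
          f"({seconds / 60:,.2f} minutes, {seconds / 3600:,.2f} hours)")
```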

Oracle’s new NoSQL announcement

Oracle’s announcement of a NoSQL solution at Oracle Open World 2011 has produced a fair amount of discussion. Curt Monash blogged about it some days ago, and so did Dan Abadi. A great description of the new offering (Dan credits it to Margo Seltzer) can be found here or here. I think the announcement, and this whitepaper do in fact bring something new to the table that we’ve not had until now.

- First, the Oracle NoSQL solution extends the notion of configurable consistency in a surprising way. Solutions so far had ranged from synchronous consistency to eventual consistency. But, all solutions did speak of consistency at some point in time. Eventual consistency has been the minimum guarantee of other NoSQL solutions. The whitepaper referenced above makes this very clear and characterizes this not in terms of consistency but durability.

Oracle NoSQL Database also provides a range of durability policies that specify what guarantees the system makes after a crash. At one extreme, applications can request that write requests block until the record has been written to stable storage on all copies. This has obvious performance and availability implications, but ensures that if the application successfully writes data, that data will persist and can be recovered even if all the copies become temporarily unavailable due to multiple simultaneous failures. At the other extreme, applications can request that write operations return as soon as the system has recorded the existence of the write, even if the data is not persistent anywhere. Such a policy provides the best write performance, but provides no durability guarantees. By specifying when the database writes records to disk and what fraction of the copies of the record must be persistent (none, all, or a simple majority), applications can enforce a wide range of durability policies.

- Second, it sets forth a very specific set of use-cases for this product. There has been much written by NoSQL proponents about its applicability in all manner of data management situations. I find this section of the whitepaper to be particularly fact based.

The Oracle NoSQL Database, with its “No Single Point of Failure” architecture is the right solution when data access is “simple” in nature and application demands exceed the volume or latency capability of traditional data management solutions. For example, click-stream data from high volume web sites, high-throughput event processing, and social networking communications all represent application domains that produce extraordinary volumes of simple keyed data. Monitoring online retail behavior, accessing customer profiles, pulling up appropriate customer ads and storing and forwarding real-time communication are examples of domains requiring the ultimate in low-latency access. Highly distributed applications such as real-time sensor aggregation and scalable authentication also represent domains well-suited to Oracle NoSQL Database.

Several have also observed that this position is in stark contrast to Oracle’s previous position on NoSQL. Oracle released a whitepaper written in May 2011 entitled “Debunking the NoSQL Hype”. This document has been removed from Oracle’s website. You can, however, find cached copies all over the internet. Ironically, the last line in that document reads,

Go for the tried and true path. Don’t be risking your data on NoSQL databases.

With all that said, this certainly seems to be a solution that brings an interesting twist to the NoSQL solutions out there, if nothing else to highlight the shortcomings of existing NoSQL solutions.

[2011-10-07] Two short updates here.

- There has been an interesting exchange on Dan Abadi’s blog (comments) between him and Margo Seltzer (the author of the whitepaper) on the definition of eventual consistency. I subscribe to Dan’s interpretation that says that perpetually returning to T0 state is not a valid definition (in the limit) of eventual consistency.

- Some kind soul has shared the Oracle “Debunking the NoSQL Hype” whitepaper here. You have to click download a couple of times and then wait 10 seconds for an ad to complete.

Great post about fundraising for startups

I just read a great post about fundraising in startups.

A brief hiatus, and we’re back!

Since early last year when I posted my last blog entry, I’ve been a bit “preoccupied”. Around that time, I started in earnest on getting a start-up off the ground. It was a winding road, and I did not get around to writing anything on this blog. Over the past several months, I have been resurrecting this blog.

The old blog (there’s still a shell there) was called Hypecycles (https://hypecycles.wordpress.com) and try as I might, I could not get http://www.hypecycles.com for this blog.

What’s with “Pizza and Code”?

The last eighteen or so months have been spent getting ParElastic off the ground. The quintessential startup is two guys working in the garage, and subsisting on Pizza! The software startup is therefore two things, Pizza and Code!

What’s ParElastic?

ParElastic is a startup that is building elastic database middleware for the cloud. Want to know more about ParElastic? Go to http://www.parelastic.com. Starting ParElastic has been an incredible education, one that can only be acquired by actually starting a company.

Over the next couple of blog posts, I will quickly cover the two or so years from mid 2009 to the present.

Enjoy!

It is 2010 and RAID5 still works …

Some years ago (2007, 2008) when I cared a little more about things like RAID and RAID recovery, I read an article in ZDNET by Robin Harris that made the case for why disk capacity increases coupled with an almost invariant URE (Unrecoverable Read Error) rate meant that RAID5 was dead in 2009. A follow-on article, also by Robin Harris, appeared recently; it extends the same logic and claims that RAID6 would stop working in 2019.

The crux of the argument is this. As disk drives have become larger and larger (approximately doubling every two years), the URE rate has not improved at the same pace. The URE rate measures the frequency of occurrence of an Unrecoverable Read Error and is typically expressed in errors per bit read. For example, a URE rate of 1E-14 (10 ^ -14) implies that, statistically, an unrecoverable read error would occur once in every 1E14 bits read (1E14 bits = 1.25E13 bytes or approximately 12.5TB).

Further, Robin considers a RAID array (RAID5 or RAID6) that is running normally when a drive suffers a catastrophic failure that prompts a reconstruction from parity. In that scenario, it is perfectly conceivable that a single URE may occur while reading the remaining (N-1) drives, data and parity, in order to rebuild the failed drive. That URE would render the RAID volume failed.

The argument is that as disk capacities grow, and the URE rate does not improve at the same rate, the probability of a RAID5 rebuild failure increases over time. Statistically, he shows that by 2009 disk capacities would have grown enough to make it pointless to use RAID5 for any array of meaningful size.
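To make the arithmetic concrete, here is a rough sketch of the calculation as I understand it. It assumes that bit errors are independent and that a RAID5 rebuild reads every surviving drive in full; both are simplifications of mine, and the function names are made up for illustration.

```cpp
#include <cassert>
#include <cmath>

// Bytes that must be read to rebuild one failed drive in a RAID5 array,
// assuming every surviving drive is read in full.
double raid5_rebuild_bytes(int drives_in_array, double bytes_per_drive) {
    assert(drives_in_array >= 3);   // RAID5 needs at least three drives
    assert(bytes_per_drive >= 0.0);
    return (drives_in_array - 1) * bytes_per_drive;
}

// Probability of hitting at least one URE while reading `bytes_read` bytes,
// assuming independent bit errors at a rate of `ure_per_bit` (e.g. 1e-14).
double rebuild_ure_probability(double bytes_read, double ure_per_bit) {
    assert(bytes_read >= 0.0);
    assert(ure_per_bit >= 0.0 && ure_per_bit < 1.0);
    const double bits = bytes_read * 8.0;
    // 1 - (1 - p)^bits, written with log1p/expm1 so that a tiny p
    // is not lost to floating point rounding.
    return -std::expm1(bits * std::log1p(-ure_per_bit));
}
```

For a hypothetical seven-drive array of 2TB drives and a URE rate of 1E-14, rebuild_ure_probability(raid5_rebuild_bytes(7, 2e12), 1e-14) comes out a little over 0.6, which is the kind of number that drives the "RAID5 is dead" conclusion.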

So, in 2007 he wrote:

RAID 5 protects against a single disk failure. You can recover all your data if a single disk breaks. The problem: once a disk breaks, there is another increasingly common failure lurking. And in 2009 it is highly certain it will find you.

and in 2009, he wrote:

SATA RAID 6 will stop being reliable sooner unless drive vendors get their game on. More good news: one of them already has.

The logic proposed is accurate but, IMHO, incomplete. One important aspect that the analysis fails to point out is something that RAID vendors have already been doing for many years now.

When disk drives looked like this (picture at right), the predominant failure mode was the catastrophic failure. Drives either worked or didn’t work any longer. At some level, that was a reflection of the fact that the Drive Permanent Failure (DPF) frequency was significantly higher than the URE frequency, and therefore the only observed failure mode was catastrophic failure.

As drives got bigger, and certainly in 1988 when Patterson and others first proposed the notion of RAID, it made perfect sense to wait for a DPF and then begin drive reconstruction. The possibility of a URE was so low (given drive capacities) that all you had to worry about was the rebuild time, and the degraded performance during the rebuild (as I/O’s may have to be satisfied through block reconstruction).

But, that isn’t how most RAID controllers today deal with drive URE’s and drive failures. On the contrary, for some time now, RAID controllers (at least the recent ones I’ve read about) have used better methods to determine when to perform the rebuild.

Consider this alternative, which I know to be used by at least a couple of array vendors. When a drive in a RAID volume reports a URE, the array controller increments a count and satisfies the I/O by rebuilding the block from parity. It then performs a rewrite on the disk that reported the URE (potentially with verify) and if the sector is bad, the microcode will remap and all will be well.

When the counter exceeds some threshold, and with the disk that reported the URE still in a usable condition, the RAID controller will begin the RAID5 recovery. Robin is correct that RAID recovery after DPF is something that will become less and less useful as drive capacities grow. But, with improvements in integration of SMART and the significant improvements in the predictability of drive failures, the frequency of RAID5 and RAID6 reconstruction failures is dramatically lower than that predicted in the referenced articles, as these reconstructions are triggered by UREs and not by a DPF.
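The counting policy described above can be sketched roughly as follows. This is my own illustration and not any vendor's firmware: the class name, the action names and the threshold value are all assumptions, and the actual parity reconstruction and sector rewrite are left out.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// What the controller does after a drive reports a URE (hypothetical names).
enum class Action {
    RepairBlock,            // rebuild the block from parity and rewrite it in place
    StartProactiveRebuild   // too many UREs: rebuild while the drive is still readable
};

// Tracks URE counts per drive and decides when to start a rebuild.
class UreTracker {
public:
    UreTracker(int drive_count, int threshold)
        : counts_(static_cast<std::size_t>(drive_count), 0), threshold_(threshold) {
        assert(drive_count > 0);
        assert(threshold > 0);
    }

    // Record one URE reported by `drive` and return the action to take.
    Action on_ure(int drive) {
        assert(drive >= 0 && drive < static_cast<int>(counts_.size()));
        int& count = counts_[static_cast<std::size_t>(drive)];
        ++count;
        // The rebuild starts only once the count exceeds the threshold.
        return count > threshold_ ? Action::StartProactiveRebuild
                                  : Action::RepairBlock;
    }

private:
    std::vector<int> counts_;   // UREs seen so far, per drive
    int threshold_;             // assumed to be a tunable of the controller
};
```

With a threshold of 2, the first two UREs on a drive are repaired in place and the third one triggers the rebuild. The point of the design is that the rebuild starts while the suspect drive can still be read, so a second URE elsewhere does not leave a block unrecoverable.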

Look at the specifications for the RAID controller you use.

When is RAID recovery initiated? Upon the occurrence of an Unrecoverable Read Error (URE) or upon the occurrence of a Drive Permanent Failure (DPF)?

Several people have proposed that ZFS with multiple copies is the way to go. While it addresses the issue, I submit to you that it is at the wrong level of the stack. Mirroring at the block level, with the option to have multiple mirrors, is the correct (IMHO) solution. Disk block error recovery should not be handled in the file system.

The Application Marketplace: Android’s worst enemy?

I recently got an Android (Motorola A855, aka droid) phone. I had been using a Windows based device (have been since about 2003). I was concerned about the bad reviews of poor battery life and the fact that Bluetooth Voice Dialing was not present. I figured that the latter was a software thing and could be added later. So, with some doubt, I started using my phone.

On the first day, with a battery charged overnight, I proceeded to surf the Marketplace and download a few applications. I got a Google Voice Dialer (not the one from Google), and a couple of other “marketplace” applications. I used the maps with the GPS for a short while and in about 8 hours the yellow sign of “low battery” came on. I had Google (GMAIL) synchronization set to the default (sync enabled).

Pretty crappy, I thought. My Samsung went for two days without a problem. I had activesync with server (Exchange) or GMail refresh every 5 minutes for years!

The Google Voice dialer I downloaded had some bugs (it messed up the call log pretty badly) and I got bored of the other applications I had downloaded.

Time for a hard reset and restart for the phone (just to be sure I got rid of all the gremlins. After all, I was a Windows phone user, this was a weekly ritual).

I got the update to Google Maps, set synch to continuous, downloaded the “sky map” application and charged the phone up fully. That was on Wednesday afternoon (17th). Today is the 20th and the battery is still all green on the home page.

The robustness of downloaded Android Apps

One of the things that makes the android phone so attractive (the application marketplace) is certainly a big problem. The robustness and stability of the downloaded applications cannot be guaranteed. We all realize that “your mileage may vary”. But, a quick look at the “Best Practices” on the android SDK site indicates that a badly written application can keep the CPU too busy and burn through your battery.

Maybe the problem with Android phones (and battery life in particular) is more an issue of poorly written applications.

Apple (with the Macintosh) had a tight grip on the applications that could be released on the Mac. This helped them ensure that buggy software didn’t give the Mac a bad name. I’m sure Windows users can relate to this.

They seem to have the same control on the iPhone App Store. Maybe that’s why I don’t hear so much about crappy applications on the iPhone that crash or suck the battery dry!

Should Google take some control over the crap on the marketplace or will it all straighten itself out over time?

Punishment must fit the crime

I regularly read Dr. Dobbs Code Talk and noticed this article today. What caught my attention was not the article itself, but rather the first response to the article from Jack Woehr.

Reproduced below is a screen shot of the page that I read and Jack’s comments. Really, I ask you, is C# all that bad?

Microsoft patent 7617530, the flap about sudo

The blogosphere has been buzzing with indignation about a Microsoft patent application 7617530 that apparently was granted earlier this month. You can read the application here.

Yes, enough people have complained that this is like sudo and why did Microsoft get a patent for this. In fairness the patent does attempt to distinguish what is being claimed from sudo and provides copious references to sudo. What few have mentioned is that the thing that Microsoft patents is in fact the exact functionality that some systems like Ubuntu use to allow non-privileged users to perform privileged tasks.

In PC Magazine, Matthew Murray writes,

Because a graphical interface is not a part of sudo, it seems clear the patent refers to a Windows component and not a Linux one. The patent even references several different online sudo resources, further suggesting Microsoft isn’t trying to put anything over on anyone. The same section’s reference to “one, many, or all accounts having sufficient rights” suggests a list that sudo also doesn’t possess.

IMHO, they may be missing something here.

Let’s set that all aside. What I find interesting is this. The patent application states, and I reproduce three paragraphs of the patent application here and have highlighted three sentences (the first sentences in each paragraph).

Standard user accounts permit some tasks but prohibit others. They permit most applications to run on the computer but often prohibit installation of an application, alteration of the computer’s system settings, and execution of certain applications. Administrator accounts, on the other hand, generally permit most if not all tasks.

Not surprisingly, many users log on to their computers with administrator accounts so that they may, in most cases, do whatever they want. But there are significant risks involved in using administrator accounts. Malicious code may, in some cases, perform whatever tasks are permitted by the account currently in use, such as installing and deleting applications and files–potentially highly damaging tasks. This is because most malicious code performs its tasks while impersonating the current user of the computer–thus, if a user is logged on with an administrator account, the malicious code may perform dangerous tasks permitted by that account.

To reduce these risks, a user may instead log on with a standard user account. Logging on with a standard user account may reduce these risks because the standard user account may not have the right to permit malicious code to perform many dangerous tasks. If the standard user account does not have the right to perform a task, the operating system may prohibit the malicious code from performing that task. For this reason, using a standard user account may be safer than using an administrator account.

Absolutely! Most people don’t realize that they are logged in as users with Administrator rights and can inadvertently do damaging things.

My question is this: why is the default user created when you install Windows on a PC an administrator user? As you go through the install process, the thing asks you questions like “what is your name” and “how would you like to login to your PC”. It uses this to set up the first user on the machine. Why is that user an administrator user?

If Microsoft were smart (and really wanted to be good about this), the installation process would create two users: a day-to-day user who is a non-administrator, and an administrator user.

I’m a PC and if Windows 8 comes up with an installation process that creates two users, a non-administrator user and an administrator user, then it would have been my idea. But, I don’t intend to go green holding my breath for this to happen. Someone tell me if it does.

A Report from Boston’s First “Big Data Summit”

A short write-up about last night’s Big Data Summit appeared on xconomy today.

My thanks to our sponsors, Foley Hoag LLP and the wonderful team at the Emerging Enterprise Center, Infobright, Expressor Software, and Kalido.

Wow! Google Documents can now share folders.

Boston Big Data Summit Kickoff, October 22nd 2009

Since the announcement of the Boston Big Data Summit on the 2nd of October, we have had a fantastic response. The event sold out two days ago. We figured that we could remove the tables from the room and accommodate more people. And, we sold out again. The response has been fantastic!

If you have registered but you are not going to be able to attend, please contact me and we will make sure that someone on the waiting list is confirmed.

There has been some question about what “Big Data” is. Curt Monash who will be delivering the keynote and moderating the discussion at the event next week writes:

… where “Big Data” evidently is to be construed as anything from a few terabytes on up. (Things are smaller in the Northeast than in California …)

When you catch a fish (whether it is the little fish on the left or the bigger fish on the right), the steps to prepare it for the table are surprisingly similar. You may have more work to do with the big fish and you may use different tools to do it with; but the things are the same.

So, while size influences the situation, it isn’t only about the size!

In my opinion, whether data is “Big” or not is more of a threshold discussion. Data is “Big” if the tools and techniques being used to acquire, cleanse, pre-process, store, process and archive, are either unable to keep up, or are not cost effective.

Yes, everything is bigger in California, even the size of the mess they are in. Now, that is truly a “Big Problem”!

The 50,000 row spreadsheet, the half a terabyte of data in SQL Server, or the 1 trillion row table on a large ADBMS are all, in their own ways, “Big Data” problems.

The user with 50k rows in Excel may not want (or be able to afford) a solution with a “real database”, and may resort to splitting the spreadsheet into two sheets. The user with half a terabyte of SQL Server or MySQL data may adopt some home-grown partitioning or sharding technique instead of upgrading to a bigger platform, and the user with a trillion CDR’s may reduce the retention period; but they are all responding to the same basic challenge of “Big Data”.

We now have three panelists:

- Ellen Rubin, Founder & VP Products, Cloudswitch

- Larry Dennison, Ph.D. President and Founder, Lightwolf Technologies

- David Cohen, Chief Architect, Cloud Infrastructure Group, EMC Corporation.

It promises to be a fun evening.

I have some thoughts on subjects for the next meeting. If you have ideas, please post a comment here.

Massachusetts Non-Compete Public Hearing

A quick update on the hearings on the non-compete legislation that were held today.

[On Sept 12, 2022 I’m salvaging this old post that I published on my old blog in 2009]

A quick update on the Public Hearings at the Joint Committee on Labor and Workforce Development held in Boston on October 7th, 2009.

Today I went to the State House in Boston and testified before the Joint Committee on Labor and Workforce Development on the subject of Non-Competes in the state. The hearings today were dominated by bills that had to do with “paid sick days”. Here is the day’s agenda.

If you were a mother and wanted to make the case for paid sick days to care for your child, what would be better than to bring your child with you when you are about to testify to the Committee on Labor and Workforce Development on a bill about paid sick days? To be fair, the child sat quietly and ate a peanut butter and jelly sandwich and at one point tried to help read out her mother’s prepared testimony.

Hearing the testimony from several people and seeing how many children there were in the room drove home the point that many people made. When their children were sick, they had to take them along to work because they could not risk losing their jobs. That’s just wrong; I had assumed that most people had paid sick leave. Unfortunately, I learned today that this is not the case.

On MapReduce and Relational Databases – Part 1

Describes MapReduce and why WOTS (Wart-On-The-Side) MapReduce is bad for databases.

This is the first of a two-part blog post that presents a perspective on the recent trend to integrate MapReduce with Relational Databases especially Analytic Database Management Systems (ADBMS).

The first part of this blog post provides an introduction to MapReduce, provides a short description of the history and why MapReduce was created, and describes the stated benefits of MapReduce.

The second part of this blog post provides a short description of why I believe that integration of MapReduce with relational databases is a significant mistake. It concludes by providing some alternatives that would provide much better solutions to the problems that MapReduce is supposed to solve.

Continue reading “On MapReduce and Relational Databases – Part 1”

On MapReduce and Relational Databases – Part 2

This is the second of a two-part blog post that presents a perspective on the recent trend to integrate MapReduce with Relational Databases especially Analytic Database Management Systems (ADBMS).

The first part of this blog post provides an introduction to MapReduce, provides a short description of the history and why MapReduce was created, and describes the stated benefits of MapReduce.

The second part of this blog post provides a short description of why I believe that integration of MapReduce with relational databases is a significant mistake. It concludes by providing some alternatives that would provide much better solutions to the problems that MapReduce is supposed to solve.

Continue reading “On MapReduce and Relational Databases – Part 2”

Announcing the Boston Big Data Summit

Announcement for the kickoff of the Boston “Big Data Summit”. The event will be held on Thursday, October 22nd 2009 at 6pm at the Emerging Enterprise Center at Foley Hoag in Waltham, MA. Register at http://bigdata102209.eventbrite.com

The Boston “Big Data Summit” will be holding its first meeting on Thursday, October 22nd 2009 at 6pm at the Emerging Enterprise Center at Foley Hoag in Waltham, MA.

The Boston area is home to a large number of companies involved in the collection, storage, analysis, data integration, data quality, master data management, and archival of “Big Data”. If you are involved in any of these, then the meeting of the Boston “Big Data Summit” is something you should plan to attend. Save the date!

The first meeting of the group will feature a discussion of “Big Data” and the challenges of “Big Data” analysis in the cloud.

Over 120 people signed up as of October 14th 2009.

There is a waiting list. If you are registered and won’t be able to attend, please contact me so we can allow someone on the wait list to attend instead.

Seating is limited so go online and register for the event at http://bigdata102209.eventbrite.com.

The Boston “Big Data Summit” thanks the Emerging Enterprise Center at Foley Hoag LLP for their support and assistance in organizing this event.

- 6:00 PM to 7:00 PM: Networking

- 7:00 PM to 7:45 PM: Keynote by Curt Monash, President, Monash Research – Leading Industry Analyst will cover The Information Marketplace

- 7:45 PM to 8:30 PM: Panel On Big Data and Cloud Computing moderated by Curt Monash

- Panel

  - Ellen Rubin, Founder & VP Products, Cloudswitch

  - Larry Dennison, Ph.D. President and Founder, Lightwolf Technologies

  - David Cohen, Chief Architect, Cloud Infrastructure Group, EMC Corporation.

- 8:30 PM: Conclusion and wrap up.

The Boston “Big Data Summit” is being sponsored by Foley Hoag LLP, Infobright, Expressor Software, and Kalido

For more information about the Boston “Big Data Summit” please contact the group administrator at boston.bigdata@gmail.com

The Boston Big Data Summit is organized by Bob Zurek and Amrith (me) in partnership with the Emerging Enterprise Center at Foley Hoag LLP.

Tell me about something you failed at, and what you learnt from it.

I have been involved in a variety of interviews both at work and as part of the selection process in the town where I live. Most people are prepared for questions about their background and qualifications. But, at a whole lot of recent interviews that I have participated in, candidates looked like deer in the headlights when asked the question (or a variation thereof),

“Tell me about something that you failed at and what you learned from it”

A few people turn that question around and try to give themselves a back-handed compliment. For example, one that I heard today was,

“I get very absorbed in things that I do and end up doing an excellent job at them”

Really, why is this a failure? Can’t you get a better one?

Folks, if you plan to go to an interview, please think about this in advance and have a good answer to this one. In my mind, not being able to answer this question with a “real failure” and some “real learnings” is a disqualifier.

One thing that I firmly believe is that failure is a necessary by-product of showing initiative in just the same way as bugs are a natural by-product of software development. And, if someone has not made mistakes, then they probably have not shown any initiative. And if they can’t recognize when they have made a mistake, that is scary too.

Finally, I have told people who have been in teams that I managed that it is perfectly fine to make a mistake; go for it. So long as it is legal, within company policy and in keeping with generally accepted norms of behavior, I would support them. So, please feel free to make a mistake and fail, but please, try to be creative and not make the same mistake again and again.

Oracle fined $10k for violating TPC’s fair use rules

In a letter dated September 25, 2009, the TPC fined Oracle $10k based on a complaint filed by IBM. You can read the letter here.

Recently, Oracle ran an advertisement in The Wall Street Journal and The Economist making unsubstantiated superior performance claims about an Oracle/Sun configuration relative to an official TPC-C result from IBM. The ad ran twice on the front page of The Wall Street Journal (August 27, 2009 and September 3, 2009) and once on the back cover of The Economist (September 5, 2009). The ad references a web page that contained similar information and remained active until September 16, 2009. A complaint was filed by IBM asserting that the advertisement violated the TPC’s fair use rules.

Oracle is required to do four things:

1. Oracle is required to pay a fine of $10,000.

2. Oracle is required to take all steps necessary to ensure that the ad will not be published again.

3. Oracle is required to remove the contents of the page www.oracle.com/sunoraclefaster.

4. Oracle is required to report back to the TPC on the steps taken for corrective action and the procedures implemented to ensure compliance in the future.

At the time of this writing, the link http://www.oracle.com/sunoraclefaster is no longer valid.

Can you copyright movie times?

MovieShowtimes.com, a site owned by West World Media, believes that they have!

In his article, Michael Masnick relates the experience of a reader, Jay Anderson, who found a loophole on a MovieShowtimes.com web page and figured out how to get movie times for a given zip code. He (Jay Anderson) then contacted the company asking how he could become an affiliate and drive traffic their way and was rewarded with some legal mumbo jumbo.

First of all, I think the minion at the law firm was taking a course on “Nasty Letter Writing 101” and did a fine job. I’m no copyright expert but if I received an offer from someone to drive more traffic to my site my first answer would not be to get a lawyer involved.

Second, this whole episode could have well been featured in the book, Letters from a Nut, by Ted L. Nancy or the sequel More Letters from a Nut.

But, this reminds me of something a former co-worker told me about an incident where his daughter wrote a nice letter to a company and got her first taste of legal overzealousness. He can correct the facts and fill in the details but if I recall correctly, the daughter in question had written letters to many companies asking the usual children’s questions about how pretzels, or candy or a nice toy was made. In response some nice person in a marketing department sent a gift hamper back with a polite explanation of the process, etc. But one day the little child wanted to know (if my memory serves me correctly) why M&M’s were called M&M’s. So, along went the nice letter to the address on the box. The response was a letter from the same guy who now works for MovieTimesForDummies.com explaining that M&M’s was a copyright of the so-and-so-company and any attempt to blah blah blah.

I think it is only a matter of time before MovieTimesForDummies.com releases exactly the same app that Jay Anderson wanted to, closes the loophole that he found and fires the developer who left it there in the first place.

Oh, wait, I just got a legal notice from Amazon saying that the link on this blog directing traffic to their site is a violation of something or the other …

Multithreaded File I/O (Reflections on Dr. Dobb’s article by Stefan Wörthmüller)

Thoughts on the results that Stefan Wörthmüller reports in his article on Dr. Dobb’s Journal.

I ran across an interesting article on Multi-Threaded File I/O in Dr. Dobb’s today. You can read the article at http://www.ddj.com/hpc-high-performance-computing/220300055

I was particularly intrigued by the statements on variability,

I repeated the entire test suite three times. The values I present here are the average of the three runs. The standard deviation in most cases did not exceed 10-20%. All tests have been also run three times with reboots after every run, so that no file was accessed from cache.

Initially, I thought 10-20% was a bit much; this seemed like a relatively straightforward test and variability should be low. Then I looked at the source code for the test and I’m now even more puzzled about the variability.

Get a copy of the sources here. It is a single source file and in the only case of randomization, it uses rand() to get a location into the file.

The code to do the random seek is below

    if(RandomCount)
    {
        // Seek new position for Random access
        if(i >= maxCount)
            break;

        long pos = (rand() * fileSize) / RAND_MAX - BlockSize;
        fseek(file, pos, SEEK_SET);
    }

While this is a multi-threaded program, I see no calls to srand() anywhere in the program. Just to be sure, I modified Stefan’s program as attached here. (My apologies, the file has an extension of .jpg because I can’t upload a .cpp or .zip onto this free wordpress blog. The file is a Windows ZIP file, just rename it).

    ///////////////////////////////////////////////////////////////////////////////
    // mtRandom.cpp Amrith Kumar 2009 (amrith (dot) kumar (at) gmail (dot) com)
    // This program is adapted from the program FileReadThreads.cpp by Stefan Woerthmueller
    // No rights reserved. Feel Free to do what ever you like with this code
    // but don't blame me if the world comes to an end.

    #include "Windows.h"
    #include "stdio.h"
    #include "conio.h"
    #include <stdlib.h>   // rand()

    ///////////////////////////////////////////////////////////////////////////////
    // Worker Thread Function
    // Writes the first ten values returned by rand() to file-<index>.txt.
    // srand() is deliberately never called.
    ///////////////////////////////////////////////////////////////////////////////
    DWORD WINAPI threadEntry(LPVOID lpThreadParameter)
    {
        // The thread index is passed by value inside the pointer-sized parameter.
        int index = (int)(INT_PTR)lpThreadParameter;
        FILE * fp;
        char filename[32];

        sprintf ( filename, "file-%d.txt", index );
        fprintf ( stderr, "Thread %d started\n", index );

        if ((fp = fopen ( filename, "w" )) == (FILE * ) NULL)
        {
            fprintf (stderr, "Error opening file %s\n", filename );
        }
        else
        {
            for (int i = 0; i < 10; i ++)
            {
                fprintf ( fp, "%d\n", rand());
            }
            fclose (fp);
        }

        fprintf ( stderr, "Thread %d done\n", index );
        return 0;
    }

    #define MAX_THREADS (5)

    int main(int argc, char* argv[])
    {
        HANDLE h_workThread[MAX_THREADS];

        // Start the workers one second apart so that each one runs at a different time.
        for(int i = 0; i < MAX_THREADS; i++)
        {
            h_workThread[i] = CreateThread(NULL, 0, threadEntry, (LPVOID)(INT_PTR) i, 0, NULL );
            Sleep(1000);
        }

        WaitForMultipleObjects(MAX_THREADS, h_workThread, TRUE, INFINITE);
        printf ( "All done. Good bye\n" );
        return 0;
    }

So, I confirmed that Stefan will be getting the same sequence of values from rand() over and over again, across reboots.
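The reason is easy to show in isolation. The C standard says that rand() called before any call to srand() produces the same sequence as if srand(1) had been called first, so a program that never seeds the generator replays one fixed sequence on every run. Here is a minimal, portable sketch of that behavior; the helper name first_values is mine and is not part of Stefan's program. As far as I know, the Microsoft C runtime also keeps this state per thread, which would explain why every worker thread above starts from the same point, but treat that as my understanding rather than a guarantee.

```cpp
#include <cassert>
#include <cstdlib>
#include <vector>

// Seed the generator with `seed` and return the first `n` values from rand().
// Calling this twice with the same seed yields the same values, which is
// exactly what an unseeded program sees from one run to the next.
std::vector<int> first_values(unsigned int seed, int n) {
    assert(n >= 0);
    std::srand(seed);
    std::vector<int> values;
    values.reserve(static_cast<std::size_t>(n));
    for (int i = 0; i < n; ++i) {
        values.push_back(std::rand());
    }
    return values;
}
```

Comparing first_values(1, 10) with a second call to first_values(1, 10) gives two identical sequences. Seeding from something that changes between runs (the clock, for example) is what it would take to get different file positions each time.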

Why then is he still seeing 10-20% variability? Beats me, something smells here … I would assume that from run to run, there should be very little variability.

Thoughts?

From the “way-back machine”

We’ve all heard the expression “way-back machine” and some of us know about tools like the Time Machine. But, did you know that there is in fact a “way-back machine” ?

From time to time, I have used this service and it is one of those nice corners of the web that is good to know about. I was reminded of it this morning in a conversation and that led to a nice walk through history.

If you aren’t familiar with the “way-back machine”, take a look at http://www.archive.org/web/web.php

Some day you may wonder what a web page looked like a while ago and the “way-back machine” is your solution.

Here are some interesting ones that I looked at today. The Time Magazine in February 1999.

Ever wondered what the Dataupia web page looked like in February 2006? I know someone who would get a kick out of it so I went and looked it up.

Check it out sometime, the way back machine is a wonderful afternoon diversion.

The “way back” archive is not complete, alas!

Diluting education standards in Kansas (part II)

Coming in the aftermath of the efforts to outlaw the teaching of evolution in the state, this story about Kansas is unfortunate.

http://blog.acm.org/archives/csta/2009/09/post_4.html

http://usacm.acm.org/usacm/weblog/index.php?p=741

The state has significant employment problems and the recent downturn in the economy has had a significant impact on the aircraft industry in the state. With a nascent IT start-up scene there, this is probably the worst publicity that the state could have hoped for.

Who are you, really? The value of incorrect response in challenge-response style authentication.

We all know how service providers validate the identity of callers. But, how do you validate the identity of the service provider on the other end of the telephone? In the area of computer security, the inexact challenge response mechanism is a useful way of validating identities; a wrong answer and the response to a wrong answer tell a lot.

Service providers (electricity, cable, wireless phone, POTS telephone, newspaper, banks, credit card companies) are regularly faced with the challenge of identifying and validating the identity of the individual who has called customer service. They have come up with elaborate schemes involving the last four digits of your social security number, your mailing address, your mother’s maiden name, your date of birth and so on. The risks associated with all of these have been discussed at great length elsewhere; social security numbers are guessable (see “Predicting Social Security Numbers from Public Data”, Acquisti and Gross), mailing addresses can be stolen, mother’s maiden names can be obtained (and in some Latin American countries your mother’s maiden name is part of your name) and people hand out their dates of birth on social networking sites without a problem!

![FAH8201[1] Bogus Parking ticket](https://i0.wp.com/www.pizzaandcode.com/wp-content/uploads/2009/09/fah82011.jpg)

So, apart from identity theft by someone guessing at your identity, we also have identity theft because people give out critical information about themselves. Phishing attacks are well documented, and we have heard of the viruses that have spread based on fake parking tickets.

Privacy and Information Security experts caution you against giving out key information to strangers; very sound advice. But, how do you know who you are talking to?

Consider these two examples of things that have happened to me.

1. I receive a telephone call from a person who identifies himself as being an investment advisor from a financial services company where I have an account. He informs me that I am eligible for a certain service that I am not utilizing and he would like to offer me that service. I am interested in this service and I ask him to tell me more. In order to tell me more, he asks me to verify my identity. He wants the usual four things and I ask him to verify in some way that he is in fact who he claims to be. With righteous indignation he informs me that he cannot reveal any account information until I can prove that I am who I claim to be. Of course, that sets me off and I tell him that I would happily identify myself to be who he thinks I am, if he can identify that he is in fact who he claims to be. Needless to say, he did not sell me the service that he wanted to.

2. I call a service provider because I want to make some change to my account. They have “upgraded their systems” and having looked up my account number and having “matched my phone number to the account”, they put me through to a real live person. After discussing how we will make the change that I want, the person then asks me to provide my address. Ok, now I wonder why that would be? Don’t they have my address? Surely they’ve managed to send me a bill every month.

“For your protection, we need to validate four pieces of information about you before we can proceed”, I am told.

The four items are my address, my date of birth, the last four digits of my social security number and the “name on the account”.

Of course, I ask the nice person to validate something (for example, tell me how much my last bill was) before I proceed. I am told that for my own protection, they cannot do that.

Computer scientists have developed several techniques that provide “challenge-response” style authentication where both parties can convince themselves that they are who they claim to be. For example, public-key/private-key encryption provides a simple way in which to do this. Either party can generate a random string and provide it to the other, asking the other to encrypt (in effect, sign) it using the private key that they hold. The encrypted response is returned to the sender, who checks it against the peer’s public key; that is sufficient to guarantee that the peer does in fact possess the appropriate “token”.

In the context of a service provider and a customer, there would be a mechanism for the service provider to verify that the “alleged customer” is in fact the customer who he or she claims to be but the customer also verifies that the provider is in fact the real thing.
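A minimal sketch of that two-way check, in Python. Note that it swaps in a shared secret and an HMAC for the public/private key pair mentioned above, simply because the Python standard library has no asymmetric crypto. The function names and the `customer`/`provider` role labels are my own assumptions for illustration and do not describe any real provider’s system.

```python
import hashlib
import hmac
import secrets
from typing import Tuple


def make_challenge() -> bytes:
    """Return a fresh random string (nonce) to send to the other party."""
    return secrets.token_bytes(16)


def respond(secret: bytes, role: bytes, challenge: bytes) -> bytes:
    """Prove knowledge of the secret by keying an HMAC over the challenge.

    The role label stops a response produced by one side from being
    replayed as if it came from the other side.
    """
    return hmac.new(secret, role + b"|" + challenge, hashlib.sha256).digest()


def verify(secret: bytes, role: bytes, challenge: bytes, response: bytes) -> bool:
    """Recompute the expected response and compare in constant time."""
    expected = respond(secret, role, challenge)
    return hmac.compare_digest(expected, response)


def mutual_auth(customer_secret: bytes, provider_secret: bytes) -> Tuple[bool, bool]:
    """Run both directions.

    Returns (customer_trusts_provider, provider_trusts_customer).
    """
    # The customer challenges the provider and checks the answer.
    c1 = make_challenge()
    provider_answer = respond(provider_secret, b"provider", c1)
    customer_trusts_provider = verify(customer_secret, b"provider", c1, provider_answer)

    # The provider challenges the customer and checks the answer.
    c2 = make_challenge()
    customer_answer = respond(customer_secret, b"customer", c2)
    provider_trusts_customer = verify(provider_secret, b"customer", c2, customer_answer)

    return customer_trusts_provider, provider_trusts_customer
```

If both sides really hold the same secret, `mutual_auth(secret, secret)` comes back with both checks passing; if either side is bluffing, both checks fail, and neither party has revealed the secret itself along the way.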

The risks in the first scenario are absolutely obvious; I recently received a text message (vector) that read

“MsgID4_X6V…@v.w RTN FCU Alert: Your CARD has been DEACTIVATED. Please contact us at 978-596-0795 to REACTIVATE your CARD. CB: 978-596-0795”

A quick web search does in fact show that this is a phishing event. Whether anyone tracked that phone number down to find out if its owner is a poor unsuspecting victim or the perpetrator, I am not sure.

But, what does one do when in fact they receive an email or a phone call from a vendor with whom they have a relationship?

One could contact a psychic to find out if it is authentic; check the New England SEERs, for example.

http://twitter.com/ILNorg/status/3786484194

http://twitter.com/NewEnglandSEERs

RT @Lucy_Diamond 978-596-0795 do not return call on text. Call police or your real bank. Caution bank fraud. Never give your pin to anyone

RT @Lucy_Diamond Warning bank scam via cell phone text remember never give your pin number to anyone. Your bank won’t ask you they know it

Agent: For your security please verify some information about your account. What is your account number

Me: Provide my account number

Agent: Thank you, could you give me your passphrase?

Me: ketchup

Agent: Thank you. Could you give me your mother’s maiden name

Me: Hoover Decker

Agent: Thank you. and the last four digits of your SSN

Me: 2004

Agent: Just one more thing, your date of birth please

Me: February 14th 1942

Agent: Thank you

Agent: For your security please verify some information about your account. What is your account number

Me: Provide my account number

Agent: Thank you, could you give me your passphrase?

Me: ketchup

Agent: That’s not what I have on the account

Me: Really, let me look for a second. What about campbell?

Agent: No, that’s not it either. It looks like you chose something else, but similar.

Me: Oh, of course, Heinz58. Sorry about that

Agent: That’s right, how about your mother’s maiden name.

Me: Hoover Decker

Agent: No, that’s not it.

Me: Sorry, Hoover Bissel

Agent: That’s right. And the last four of your social please

Me: 2007

Agent: thank you, and the date of birth

Me: Feb 29, 1946

Agent: Thank you

Agent: For your security please verify some information about your account.

What is your account number

Me: Provide my account number

Agent: Thank you, could you give me your passphrase?

Me: ketchup

Agent: Thank you. Could you give me your mother’s maiden name

Me: Hoover Decker

Agent: Thank you. and the last four digits of your SSN

Me: 2004

Agent: Just one more thing, your date of birth please

Me: February 14th 1942

Agent: Thank you. Could you verify the address to which you would like us to ship the package.

(At this point, I’m very puzzled and not really sure what is going on)

Me: Provided my real address (say 10 Any Drive, Somecity, 34567)

Agent: I’m sorry, I don’t see that address on the account, I have a different address.

Me: What address do you have?

Agent: I have 14 Someother Drive, Anothercity, 36789.

The address the agent provided was in fact a previous location where I had lived.

What has happened is that the cable company (like many other companies these days) has outsourced the fulfillment of the orders related to this service. In reality, all they want is to verify that the account number and the address match! How they had an old address, I cannot imagine. But, if the address had matched, they would have mailed a little package out to me (it was at no charge anyway) and no one would be any the wiser.

But, I hung up and called the cable company on the phone number on my bill and got the full fourth-degree. And they wanted to talk to “the account owner”. But, I had forgotten what I told them my SSN was … Ironically, they went right along to the next question and later told me what the last four digits of my SSN were 🙂

Someone said they were interested in the security and privacy of my personal information?

We people born on the 29th of February 1946 are very skeptical.
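The trick of leading with a deliberately wrong answer can be sketched in a few lines of Python. This is only an illustration under my own assumptions: the agent is modeled as a function that accepts or rejects an answer, and `make_genuine_agent`, `impostor_agent` and `agent_seems_genuine` are hypothetical names, not anything a real call center runs.

```python
from typing import Callable, Dict

# An agent is modeled as a function: (question, answer) -> True if accepted.
Agent = Callable[[str, str], bool]


def make_genuine_agent(records: Dict[str, str]) -> Agent:
    """Hypothetical genuine agent: accepts only answers matching the records."""
    def agent(question: str, answer: str) -> bool:
        return records.get(question) == answer
    return agent


def impostor_agent(question: str, answer: str) -> bool:
    """Hypothetical impostor: has no records, so says 'Thank you' to anything."""
    return True


def agent_seems_genuine(agent: Agent, decoys: Dict[str, str]) -> bool:
    """Offer only answers known to be wrong.

    An agent who accepts any of them cannot be checking against real records.
    """
    return not any(agent(question, wrong) for question, wrong in decoys.items())


# What is really on file, and the wrong answers offered first.
records = {"passphrase": "Heinz58", "maiden name": "Hoover Bissel"}
decoys = {"passphrase": "ketchup", "maiden name": "Hoover Decker"}

genuine_result = agent_seems_genuine(make_genuine_agent(records), decoys)
impostor_result = agent_seems_genuine(impostor_agent, decoys)
```

Here `genuine_result` is `True` and `impostor_result` is `False`. A rejected wrong answer is evidence rather than proof, but an accepted one is a very clear signal to hang up.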

Faster or Free

I don’t know how Bruce Scott’s article showed up in my mailbox but I’m confused by it (happens a lot these days).

I agree with him that too much has been made of whether a system is a columnar system, a truly columnar system, or a vertically partitioned row store; what really matters to a customer is TCO and price-performance in their own environment. Bruce says as much in his blog post:

Let’s start talking about what customers really care about: price-performance and low cost of ownership. Customers want to do more with less. They want less initial cost and less ongoing cost.

Then, he goes on to say:

On this last point, we have found that we always outperform our competitors in customer created benchmarks, especially when there are previously unforeseen queries. Due to customer confidentiality this can appear to be a hollow claim that we cannot always publicly back up with customer testimonials. Because of this, we’ve decided to put our money where our mouth is in our “Faster or Free” offer. Check out our website for details here: http://www.paraccel.com/faster_or_free.php

So, I went and looked at that link. There, it says:

Our promise: The ParAccel Analytic Database™ will be faster than your current database management system or any that you are currently evaluating, or our software license is free (Maintenance is not included. Requires an executed evaluation agreement.)

To be consistent, should that not make the promise that the ParAccel offering would provide better price-performance and lower TCO than the current system or the one being evaluated? After all, that is what customers really care about.

I’m confused. More coffee!

Oh, there’s more! Check out this link http://www.paraccel.com/cash_for_clunkers.php

Talk about fine print:

* Trade-in value is equivalent to the first year free of a three year subscription contract based on an annual subscription rate of $15K/user terabyte of data. Servers are purchased separately.